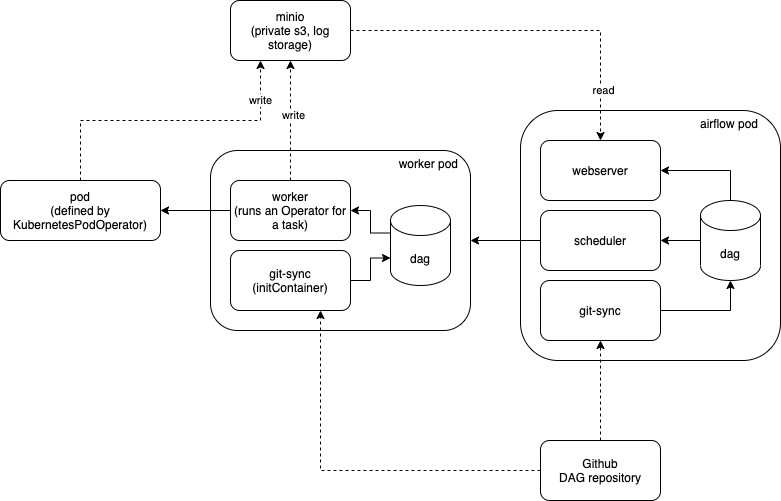

In this and the following sections, we’ll define the necessary Kubernetes objects to run theįirst, we’ll define a Deployment and a Service to run a PostgreSQL instance that Airflow įinally, having the mount running, pods will be able to mount the cluster node’s folder where they’llīe able to read and write files which will be written into our host machine. □ NOTE: This process must stay alive for the mount to be accessible. To achieve this, we need to create a PersistenVolumeClaim object: If we decide to not setĪ volume, then Airflow’s workers’ logs would be lost after they finish. In this guide, we’llĭefine a Volume that will allow us to persist logs from all Airflow’s components. There are multiple alternatives to save Airflow’s logs on a Kubernetes deployment. Kubernetes pod) we’re going to set up three volumes for different purposes using multiple Kubernetes tools: This will allow workers to load env vars from this ConfigMap when running.įor each Airflow component (i.e. AIRFLOW_KUBERNETES_ENV_FROM_CONFIGMAP_REF: this specifies the name of the ConfigMap that stores theĮnv vars (i.e.We’ll talk about this in more detail later. AIRFLOW_KUBERNETES_LOGS_VOLUME_CLAIM: this env var specifies the Kubernetes volume claim to use to.cluster node) where DAGs files are stored. AIRFLOW_KUBERNETES_DAGS_VOLUME_HOST: we’ll see this in more detail later.AIRFLOW_KUBERNETES_WORKER_CONTAINER_TAG: this env var is used to specify the docker image tag.

In the context of Kubernetes, workers will be run on a Pod. As the name suggests, this env var is to specify theĭocker image to be used for workers. Specifically for Kubernetes integration on Airflow. AIRFLOW_KUBERNETES_WORKER_CONTAINER_REPOSITORY: all env vars with the prefix AIRFLOW_KUBERNETES_ are.To parse the configuration file and this value was causing some issues because of the doubleīrackets in the configuration value in the docker image. AIRFLOW_KUBERNETES_KUBE_CLIENT_REQUEST_ARGS: when developing this guide, I found that Airflow failed.Feel free to disable it if you don’t want to see or use default DAGs.

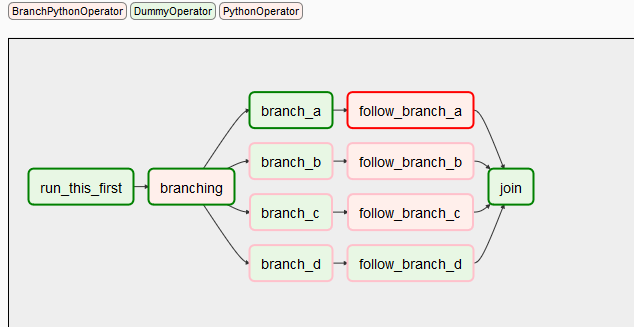

LOAD_EX: this env var is used to load Airflow’s example DAGs.POSTGRES_: these env vars are needed since our deployment needs a Postgres server running to which our Airflow components will connect to store information about DAGs and Airflow such as connections, variables and DAGs’ information such as tasks’ state.To set the Airflow executor configuration. The docker image entrypoint script uses this env var
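
As a rough sketch, the env vars described above could be collected in a ConfigMap like the one below. Airflow reads configuration overrides from env vars of the form `AIRFLOW__{SECTION}__{KEY}`, with double underscores. The ConfigMap name and every value here (image, tag, host path, claim name) are placeholders assumed for illustration, not the values used in this guide.

```yaml
# Hypothetical ConfigMap; the name and all values are illustrative assumptions.
apiVersion: v1
kind: ConfigMap
metadata:
  name: airflow-envvars-configmap        # placeholder name
data:
  # Assumed value format; check how the image's entrypoint interprets it.
  LOAD_EX: "n"
  # Plain single-brace JSON, assumed here as a way around the double-bracket issue.
  AIRFLOW__KUBERNETES__KUBE_CLIENT_REQUEST_ARGS: '{"_request_timeout": [60, 60]}'
  AIRFLOW__KUBERNETES__WORKER_CONTAINER_REPOSITORY: "example/airflow"   # placeholder image
  AIRFLOW__KUBERNETES__WORKER_CONTAINER_TAG: "latest"                   # placeholder tag
  AIRFLOW__KUBERNETES__DAGS_VOLUME_HOST: "/mnt/airflow/dags"            # placeholder host path
  AIRFLOW__KUBERNETES__LOGS_VOLUME_CLAIM: "airflow-logs-pvc"            # placeholder claim name
  # Points back at this same ConfigMap so workers load the same env vars.
  AIRFLOW__KUBERNETES__ENV_FROM_CONFIGMAP_REF: "airflow-envvars-configmap"
```

A pod can load every key of such a ConfigMap through `envFrom` with a `configMapRef` in its container spec.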
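
The PersistentVolumeClaim for logs mentioned above might look like the following minimal sketch. The claim name, access mode and requested size are assumptions. `ReadWriteMany` is chosen here because several pods write logs, but whether it is available depends on the cluster's storage provisioner.

```yaml
# Hypothetical PersistentVolumeClaim for Airflow logs; all values are assumptions.
apiVersion: v1
kind: PersistentVolumeClaim
metadata:
  name: airflow-logs-pvc     # placeholder; must match the logs volume claim env var
spec:
  accessModes:
    - ReadWriteMany          # assumed: several Airflow pods write logs
  resources:
    requests:
      storage: 1Gi           # placeholder size
```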
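
For the PostgreSQL instance that Airflow connects to, a Deployment and a Service could be sketched as below. This is not the guide's actual manifest: the object names, labels, image tag and credentials are all hypothetical. `POSTGRES_USER`, `POSTGRES_PASSWORD` and `POSTGRES_DB` are the env vars the official `postgres` image reads on startup.

```yaml
# Hypothetical PostgreSQL Deployment and Service; names and values are assumptions.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: postgres-airflow
spec:
  replicas: 1
  selector:
    matchLabels:
      app: postgres-airflow
  template:
    metadata:
      labels:
        app: postgres-airflow
    spec:
      containers:
        - name: postgres
          image: postgres:12            # placeholder tag
          ports:
            - containerPort: 5432
          env:
            - name: POSTGRES_USER
              value: airflow            # placeholder credentials; prefer a Secret in practice
            - name: POSTGRES_PASSWORD
              value: airflow
            - name: POSTGRES_DB
              value: airflow
---
apiVersion: v1
kind: Service
metadata:
  name: postgres-airflow                # other pods reach the database via this name
spec:
  selector:
    app: postgres-airflow
  ports:
    - port: 5432
      targetPort: 5432
```

With this layout, the Airflow pods would reach the database at the Service name on port 5432, which is what the `POSTGRES_` env vars would point to.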
